

Translating Human Motion into Robotic Movement

DATE 2026-02-02 16:27:34.0

- WRITER Office of the Vice President for Academic Affairs

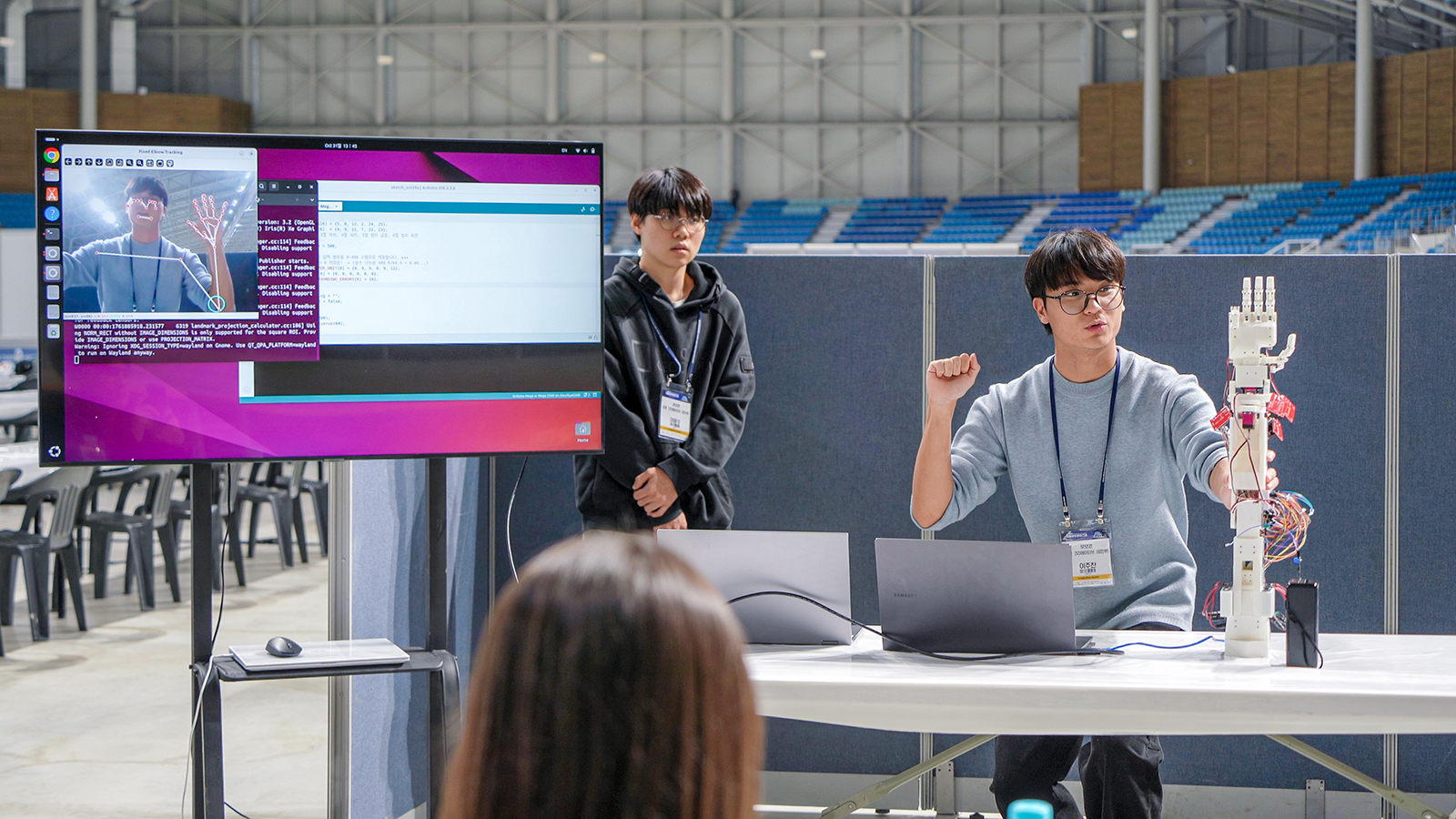

A student duo from the Department of Mechanical Engineering has developed a high-fidelity robotic arm system capable of mimicking human arm and hand movements in real time.

As humanoid robots spread across industrial and everyday environments, accurately reproducing human motion, particularly the complex movements of the arm and hand, has emerged as a central challenge in humanoid robotics. Taking on this challenge, the student team “I-Robot,” made up of Yoonsoo Lee and Juchan Lee (Mechanical Engineering, ’24), recently received the Director’s Award from the Korean Agency for Technology and Standards at the 20th International Robot Contest (IRC).

The duo presented a system titled “A Real-Time Motion-Mimicking Robotic Arm System Using MediaPipe Vision Recognition.” The system captures a user’s movements in real time through a camera and converts them into precise robot control signals. When the user moves an arm, the robotic arm tracks the trajectory in real time and reproduces the motion with high fidelity. A key feature of the system is that it relies entirely on open-source, markerless vision technologies rather than costly commercial equipment. By combining a single-camera setup with sophisticated control logic, the team lowered both the economic and the technical barriers to advanced humanoid robotics.

The project began with a clear goal: to build a motion-mimicking robotic arm that could be implemented even in low-budget environments. Rather than remaining at the level of theoretical modeling, the students undertook the entire development process themselves, from mechanical design and modeling to circuit configuration and component fabrication. The hands-on development process exposed the team to the technical challenges inherent in humanoid robot control.

To reduce costs, the team actively adopted open-source software and 3D printing technologies. At the same time, they optimized the system’s structure by focusing on core human movements, including elbow flexion, wrist rotation and bending, and finger motion. The resulting system requires no wearable sensors or specialized equipment—only a single camera is needed to accurately capture and replicate human motion.
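For readers curious how a movement such as elbow flexion can be recovered from camera landmarks, the following is a minimal sketch and not the team's actual code. It assumes a pose estimator has already supplied 3D coordinates for the shoulder, elbow, and wrist (in MediaPipe Pose these are landmarks 11, 13, and 15 on the left side); the function name `joint_angle` and the sample coordinates are hypothetical.

```python
import math


def joint_angle(a, b, c):
    """Return the angle in degrees at point b between segments b->a and b->c.

    Each point is an (x, y, z) tuple, e.g. shoulder, elbow, wrist.
    Illustrative helper only; the team's real computation is not described.
    """
    v1 = [p - q for p, q in zip(a, b)]
    v2 = [p - q for p, q in zip(c, b)]
    n1 = math.sqrt(sum(x * x for x in v1))
    n2 = math.sqrt(sum(x * x for x in v2))
    if n1 == 0 or n2 == 0:
        raise ValueError("landmarks coincide; angle is undefined")
    cos_theta = sum(x * y for x, y in zip(v1, v2)) / (n1 * n2)
    # Clamp to guard against floating-point drift outside [-1, 1].
    cos_theta = max(-1.0, min(1.0, cos_theta))
    return math.degrees(math.acos(cos_theta))


# Hypothetical landmark positions: upper arm along x, forearm bent up along y.
shoulder, elbow, wrist = (0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (1.0, 1.0, 0.0)
elbow_angle = joint_angle(shoulder, elbow, wrist)
```

With this convention a fully extended arm gives an angle near 180 degrees, and the value shrinks as the elbow flexes.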

The system operates on a structured data pipeline that processes human motion step by step, from recognition to control. The team’s development process mirrored this close integration of motion-detection and computation software with the hardware that physically executes the motion. Student Yoonsoo Lee, who was responsible for robotic arm design and fabrication, and Student Juchan Lee, who led vision recognition and control software development, worked closely together from the earliest design stages. By evaluating control logic and physical structure as a single integrated system, they ensured that computed values were mapped reliably to physical actuators, translating digital inputs into real-world robotic motion. This collaborative approach played a critical role in completing the robotic arm as a unified hardware–software system.
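The control end of such a pipeline can be illustrated with a short sketch, offered under stated assumptions rather than as the team's implementation. It assumes hobby-style servos driven by pulse widths between 500 and 2500 microseconds over a 180-degree range, and it uses simple exponential smoothing to damp frame-to-frame jitter; the names `ExponentialSmoother` and `angle_to_pulse_us`, and all numeric defaults, are hypothetical.

```python
class ExponentialSmoother:
    """Exponential moving average to damp jitter in per-frame angles."""

    def __init__(self, alpha=0.3):
        if not 0 < alpha <= 1:
            raise ValueError("alpha must be in (0, 1]")
        self.alpha = alpha
        self.value = None

    def update(self, sample):
        # The first sample initializes the filter; later ones are blended in.
        if self.value is None:
            self.value = sample
        else:
            self.value = self.alpha * sample + (1 - self.alpha) * self.value
        return self.value


def angle_to_pulse_us(angle_deg, min_us=500, max_us=2500, max_angle=180.0):
    """Map a joint angle to a servo pulse width in microseconds.

    The pulse range is an assumed, typical hobby-servo range; angles
    outside [0, max_angle] are clamped to protect the mechanism.
    """
    clamped = max(0.0, min(max_angle, angle_deg))
    return round(min_us + (max_us - min_us) * clamped / max_angle)


# Hypothetical stream of elbow angles from successive camera frames.
smoother = ExponentialSmoother(alpha=0.5)
pulses = [angle_to_pulse_us(smoother.update(a)) for a in (90.0, 180.0, 180.0)]
```

A smaller `alpha` gives steadier motion at the cost of added lag, which is the usual trade-off in real-time tracking.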

“The project showed that high-precision robotic systems can be developed even in resource-constrained environments,” said Student Juchan Lee. Student Yoonsoo Lee added, “We envision this technology serving as a practical solution in high-risk remote operations or clinical rehabilitation, bridging the gap between advanced robotics and real-world accessibility.”